PyTorch Lightning Hijacked: AI Supply Chain Attack

A poisoned PyTorch Lightning release stole credentials and impersonated Claude Code commits.

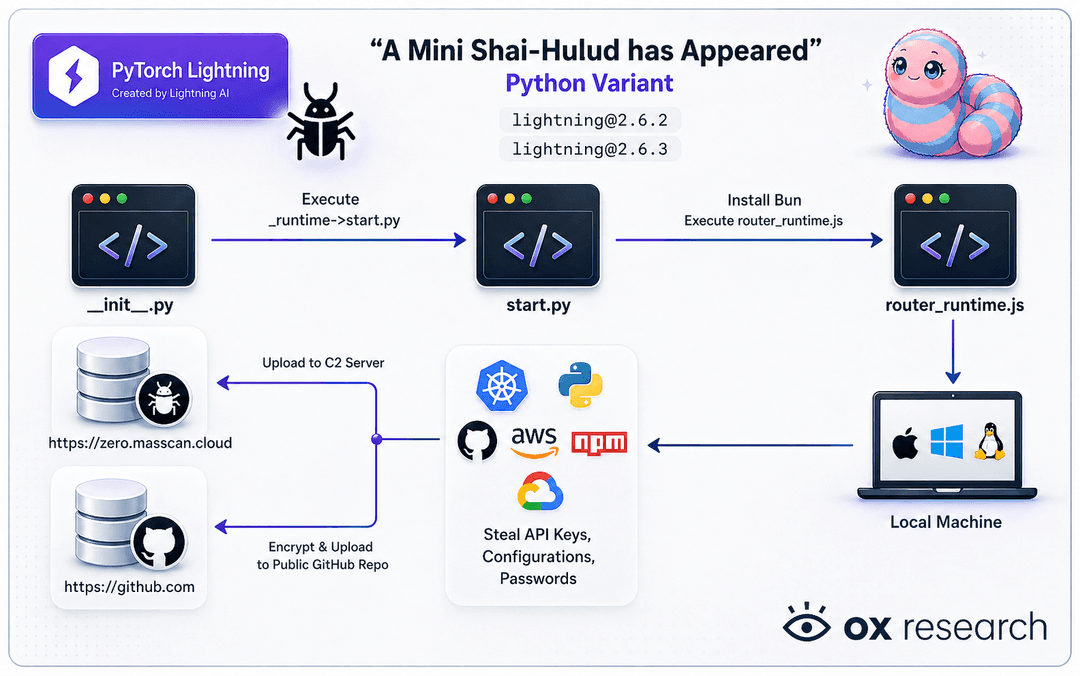

On April 30, 2026, someone slipped malware into PyTorch Lightning, one of the most widely used building blocks in AI development. Two poisoned versions went live on PyPI, the public Python package registry. The package gets hundreds of thousands of downloads a day. PyPI has now pulled it, but the damage is already done for teams that installed it during that window.

If your product touches AI in any way, your developers almost certainly use this library or something downstream of it.

How the attack actually works

Think of PyPI as the App Store for Python code. Developers install packages from it constantly, and they trust those packages the same way you trust an app from the App Store. An attacker compromised the Lightning project and uploaded two booby-trapped updates.

The moment a developer installed and ran the bad version, the malware silently stole every password, API key, and access token it could find on that machine. Then it used the stolen GitHub credentials to spread itself into other code repositories the developer had access to.

The unsettling twist: the malware signs its work as if it came from Claude Code, Anthropic's AI coding assistant. So poisoned commits look like routine AI-assisted edits the team would scroll past without a second thought.
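One way to stop scrolling past such commits is to pull AI-attributed ones into an explicit review queue. The sketch below is a minimal illustration, not a detector for this specific malware: the `Commit` record, the `needs_review` helper, and the marker strings are all hypothetical, and it assumes you have already exported commit metadata (author, message, signature status) from your Git host.

```python
from dataclasses import dataclass

# Assumption: illustrative marker strings only. These are NOT confirmed
# indicators of the Lightning malware; adjust them to what you observe.
AI_MARKERS = ("claude code", "claude")


@dataclass
class Commit:
    """Hypothetical record of commit metadata exported from a Git host."""
    sha: str
    author_email: str
    message: str
    signature_verified: bool


def needs_review(commit: Commit) -> bool:
    """Flag commits that claim AI attribution but lack a verified signature."""
    text = f"{commit.author_email}\n{commit.message}".lower()
    claims_ai = any(marker in text for marker in AI_MARKERS)
    return claims_ai and not commit.signature_verified


def review_queue(commits: list[Commit]) -> list[str]:
    """Return the SHAs a human should look at before trusting the change."""
    return [c.sha for c in commits if needs_review(c)]
```

A missing signature is only a weak signal, since an attacker who has stolen everything on a machine may hold signing keys too. Treat the output as a list of commits to read, not a verdict.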

AI libraries are the new target

The Lightning compromise is not an isolated incident. SAP-related npm packages were hit two days earlier. TanStack was brand-squatted the day before that. The same loose group of threat actors is moving from one trusted package to the next at roughly one a week.

What has changed is who they're aiming at. Supply chain attacks used to target general developer tooling. They are now hunting the libraries AI teams pull constantly: model frameworks, training pipelines, agent infrastructure. If your product is AI-shaped, you are now squarely on the target list.

Why this keeps happening

This is not an AI failure. The library did nothing wrong. The attack worked because most teams treat public package registries as automatically safe, and there's no audit step between "I want to use this library" and "this code is running with full access to our developer machines and cloud accounts."
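As a rough illustration of what an audit step could look like, the sketch below refuses any downloaded package file whose SHA-256 digest is not on a list your team has already reviewed. The function names and the idea of keeping an `approved_hashes` set are assumptions for the example; pip offers a built-in version of the same idea through its `--require-hashes` mode.

```python
import hashlib


def sha256_of(path: str) -> str:
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_approved(path: str, approved_hashes: set[str]) -> bool:
    """Return True only if the file matches a digest someone has reviewed.

    Assumption: approved_hashes is maintained by your team, for example
    copied from a lock file that was checked in after review.
    """
    return sha256_of(path) in approved_hashes
```

Under this scheme a newly published release, poisoned or not, does not match any reviewed digest, so it stays out until a person approves it.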

Vibe-coded apps and AI agents pull dependencies aggressively and ship fast, which is great for speed and terrible for supply chain hygiene. One compromised package is all it takes for stolen credentials to walk straight out of your environment and into someone else's repository.

If you cannot tell us, today, every third-party package your product depends on and who controls it, you have a problem worth fixing before it becomes a headline.
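A starting point for that inventory, sketched with Python's standard `importlib.metadata` module, is to list every package installed in an environment along with the author and home page it declares. The `inventory` function and its output fields are illustrative choices. Note the limits: declared metadata is self-reported and does not show who actually holds upload rights on the registry, so this answers "what do we depend on" better than "who controls it".

```python
from importlib import metadata


def inventory() -> list[dict]:
    """List installed distributions with their self-declared ownership fields."""
    rows = []
    for dist in metadata.distributions():
        meta = dist.metadata
        rows.append({
            "name": meta.get("Name") or "unknown",
            "version": dist.version or "unknown",
            # Self-reported by the package; not proof of who can publish it.
            "author": meta.get("Author") or meta.get("Author-email") or "unknown",
            "home_page": meta.get("Home-page") or "unknown",
        })
    return sorted(rows, key=lambda row: row["name"].lower())


if __name__ == "__main__":
    for row in inventory():
        print(f'{row["name"]}=={row["version"]}  ({row["author"]})')
```

Running it inside each build or deployment environment, and diffing the output between runs, gives a simple record of when a dependency appeared or changed version.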